Editor’s Note: This paper is part of a research project, “Countering AI Disinformation and Implications for the US-ROK Alliance,” conducted by the Stimson Center’s Korea Program and generously sponsored by the Korea Foundation. Additional papers are available in this series.

Kalliopi Mingeirou is currently the Chief of the Ending Violence against Women Section at UN Women in New York. She has been leading global initiatives, including diverse interagency initiatives, on prevention of and responses to violence against women and girls in public and private spaces. She is a lawyer by training and holds an LL.M. in public international law. Prior to joining UN Women, Ms. Mingeirou worked as a lawyer in Greece. At the international level, she worked for UN agencies and INGOs in the areas of human rights, women’s human rights, and refugee protection in several countries, in both development and humanitarian settings.

Yeliz Osman is a Policy & Programme Management Specialist within the Ending Violence Against Women Section based in UN Women’s headquarters in New York. She is currently the global programme manager of the ACT to end violence against women and girls programme, which is focused on strengthening women’s rights movements, coalition building, and advocacy to end violence against women. Prior to this, Yeliz worked as a Regional Advisor on Ending Violence against Women in UN Women’s Regional Office for the Americas and the Caribbean, and she also spent five years working in the UN Women Country Office in Mexico, where she managed the Safe Cities and Safe Public Spaces for Women and Girls Programme. Yeliz has more than 17 years of experience in preventing and responding to violence against women and girls, working in different capacities including policy, programme management, and as a practitioner. Yeliz holds a Master’s in Understanding and Securing Human Rights (Human Rights Consortium, University of London).

Raphaëlle Rafin is a Policy Specialist in the UN Women Ending Violence against Women section in New York. Prior to that, Raphaëlle worked for the UN Women multi-country office for the Maghreb as the EVAW coordinator, as a justice and human rights officer for the French Embassy in Morocco, and as a legal researcher on public international law and international human rights law cases before the United Nations Human Rights Council, the International Criminal Court, and the European Court of Human Rights. Raphaëlle holds an LL.M. in Public International Law from the University of Leiden and an MA in International Relations from Sciences Po Lille.

By Jenny Town, Senior Fellow and Director, 38 North Program

Artificial intelligence (AI) is rapidly transforming societies, economies, and political systems. While AI technologies hold promise for innovation and social good, they are also reshaping the nature, scale, and intensity of harm experienced by women and girls. One of the most pressing and underexamined consequences of this transformation is the way AI is exacerbating existing forms of violence against women and girls (VAWG), particularly through disinformation and image-based abuse. These harms are not confined to the digital sphere; rather, they increasingly translate into offline threats, intimidation, exclusion, and physical violence.

This paper examines the impact of AI on violence against women and girls, with a particular focus on disinformation, online abuse, and the erosion of women’s participation in public life. It draws on emerging evidence, normative developments, and policy dialogues to explore the scale of the problem, the trajectory from online violence to offline harm, and the implications for women human rights defenders, activists, and journalists. Finally, it outlines key pathways forward, emphasizing legislative and regulatory action, accountability frameworks, multistakeholder cooperation, and the potential role of AI itself in preventing and responding to VAWG.

AI and the Intensification of Technology-Facilitated Violence Against Women and Girls

Technology-facilitated violence against women and girls is not a brand-new phenomenon. [1] Long before the emergence of generative AI, women were disproportionately targeted by online harassment, threats, doxing, stalking, impersonation, and non-consensual sharing of intimate images. What distinguishes the current moment is the way AI has amplified these harms, making them more scalable, more anonymous, more convincing, and more difficult to detect and redress.

Disinformation and misinformation are among the most prevalent forms of technology-facilitated violence experienced by women. These tactics are frequently used to undermine women’s credibility, reputation, and authority, particularly when they occupy visible roles in public, political, or professional life. One study found that 65% of women surveyed had experienced or witnessed disinformation-based abuse, highlighting the pervasiveness of this form of violence. AI systems now enable the rapid generation and dissemination of false narratives, manipulated images, and fabricated content at unprecedented speed and scale, intensifying the impact of such attacks.

Image-based abuse represents one of the most alarming manifestations of AI-driven harm. Advances in generative AI have dramatically lowered the barriers to producing hyper-realistic deepfake images and videos, most notably non-consensual pornographic content. Recent studies estimate that 98% of all deepfake content online is non-consensual and pornographic, and that 99% of those depicted are women. This form of abuse is uniquely damaging, combining sexual violence, reputational harm, and psychological trauma, often with long-lasting consequences for victims’ personal and professional lives.

Beyond deepfakes, AI has intensified a range of other abusive practices, including doxing, violent threats, sextortion, scamming, hacking, impersonation, and stalking. Automated tools can scrape personal data, generate targeted threats, or impersonate individuals with high levels of realism, creating new vectors for coercion and intimidation. These harms are compounded by the difficulty of tracing perpetrators and the slow or inconsistent responses of platforms and legal systems.

A Tipping Point: Online Violence and the Shrinking of Women’s Public Space

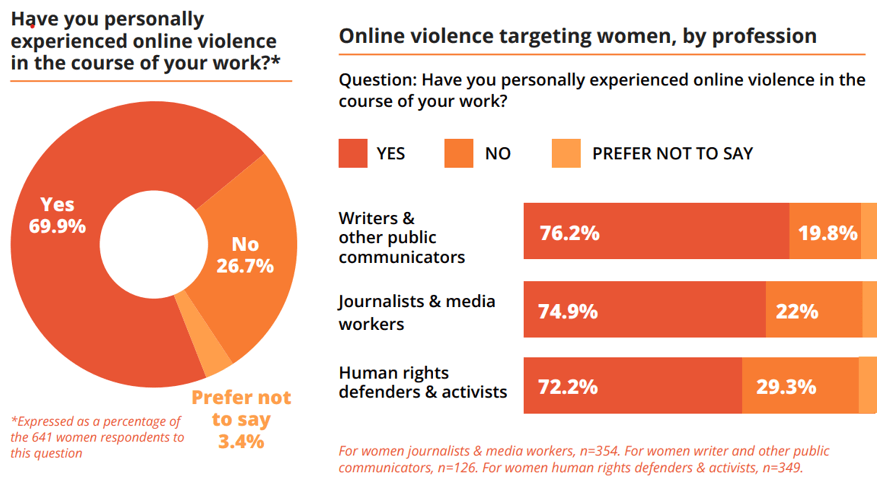

The current trajectory suggests that technology-facilitated violence against women and girls is approaching a tipping point. UN Women’s recent global study, which surveyed women on their experiences of online violence in the public sphere, reveals that the scale, severity, and normalization of abuse are increasingly shaping who can safely participate in public discourse. For many women, especially those from marginalized communities, online spaces have become sites of persistent hostility rather than opportunity.

Figure 1. Online violence against women in the public sphere [2]

This escalation has profound implications for democratic participation, freedom of expression, and gender equality. Online violence functions as a powerful silencing mechanism, pushing women to self-censor, withdraw from public engagement, or avoid leadership roles altogether. When threats and abuse are amplified through AI-driven disinformation campaigns or sexually explicit deepfakes, the costs of visibility become intolerably high.

The chilling effect extends beyond individual targets. When women journalists, activists, and political leaders are attacked, the messages they carry, and the constituencies they represent, are also undermined. Online misogyny and technology-facilitated violence against women and girls (TF VAWG) are key drivers of, and often a gateway to, radicalization and violent extremism. [3] In this sense, AI-enabled violence against women is not only a gender issue but a structural threat to inclusive governance, human rights, and social cohesion.

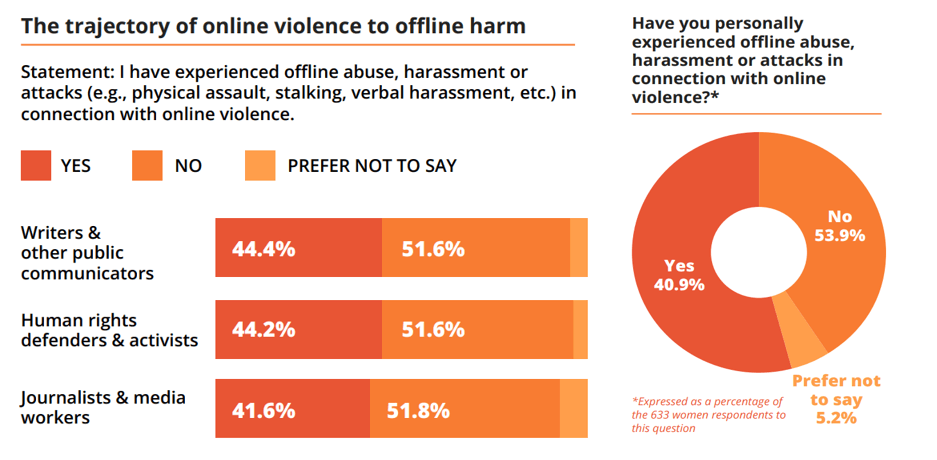

Understanding the Scale and Trajectory of Harm

Data on technology-facilitated violence against women and girls reveals both the magnitude of the problem and its evolving nature. While online abuse has long been dismissed as less serious than physical violence, emerging evidence demonstrates clear pathways from digital harm to offline consequences. Online threats can escalate into stalking, physical attacks, or coordinated harassment campaigns that disrupt women’s livelihoods and safety.

Figure 2. The trajectory of online violence to offline harm [4]

AI plays a critical role in accelerating this trajectory. Automated systems can replicate abuse across platforms, generate thousands of messages or images, and sustain campaigns over time with minimal human effort. The persistence and omnipresence of AI-enabled abuse mean that victims often experience harm as continuous and inescapable, blurring the boundaries between online and offline life. Moreover, the realism of AI-generated content complicates responses by law enforcement, employers, and the public. Deepfake images and videos can be difficult to disprove, particularly in contexts where gender stereotypes and misogynistic narratives already undermine women’s credibility. The result is a heightened risk of secondary victimization, as survivors are forced to defend themselves against fabricated evidence while navigating legal and social systems ill-equipped to respond.

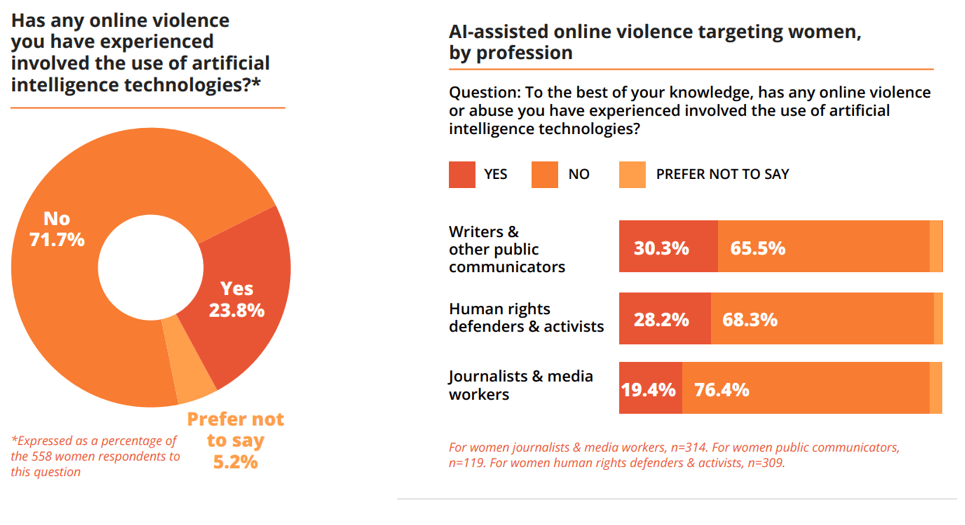

Disproportionate Impact on Women Human Rights Defenders, Activists, and Journalists

Women human rights defenders, activists, and journalists are among those most acutely affected by AI-enabled violence. Their work often challenges powerful interests, entrenched norms, or dominant narratives, making them frequent targets of coordinated attacks. AI-driven disinformation campaigns can be used to discredit their work, question their integrity, or portray them as threats to social order.

Figure 3. The role of AI in women human rights defenders’, activists’, and journalists’ experience of online violence [5]

For women in public life, deepfake pornography and impersonation pose particular risks. Fabricated images or messages can be circulated to damage reputations, undermine professional standing, or incite harassment from audiences.

Further, AI tools can be used to monitor online activity, map social networks, or identify vulnerabilities, increasing the risks associated with advocacy and mobilization. For many women, especially those operating in restrictive or hostile environments, the digital sphere has become another front line of struggle rather than a space of empowerment.

Normative Frameworks and Emerging Global Standards

In response to the growing recognition of technology-facilitated violence against women and girls, significant normative developments have emerged at the international level. These frameworks increasingly acknowledge that digital and AI-enabled harms are forms of gender-based violence that require the same seriousness, prevention, and accountability as offline abuse.

Recent normative advances emphasize states’ obligations to protect women and girls from violence in all its forms, including those facilitated by technology. They call for gender-responsive approaches to digital governance, human rights-based regulation of AI, and stronger accountability mechanisms for both state and non-state actors. Importantly, these frameworks recognize that the design, deployment, and governance of AI systems are not neutral processes but reflect social values and power relations.

Global standards also highlight the importance of intersectionality, acknowledging that women’s experiences of AI-enabled violence are shaped by race, ethnicity, disability, sexual orientation, and other factors. This perspective is essential for developing responses that are inclusive, effective, and grounded in lived realities.

Legislative and Regulatory Pathways Forward

Addressing the impact of AI on violence against women and girls requires robust legislative and regulatory action. One key priority is the adoption of frameworks that prioritize safety by design. AI systems should be required to undergo rigorous assessments to ensure they do not reproduce or amplify gender bias, discrimination, or harm. Treating AI as a sector that poses potential risks to public safety — rather than as an exceptional or self-regulating domain — is essential to avoiding harm at scale.

Accountability frameworks are equally critical. Clear legal standards should define the responsibilities of developers, deployers, and platforms in preventing and responding to AI-enabled violence. Survivors must have access to effective remedies, including content removal, redress mechanisms, and justice processes that recognize the specific harms associated with AI-generated abuse.

Regulation should also address transparency, requiring companies to disclose how AI systems are trained, how content is moderated, and how risks are identified and mitigated. Without transparency, it is impossible to assess whether commitments to safety and human rights are meaningful or merely rhetorical.

The Role of Self-Regulation and Structured Engagement

While legislation is essential, it cannot operate in isolation. Structured engagement among governments, the technology sector, and civil society is necessary to develop practical, context-sensitive solutions. In Singapore, for instance, the government launched the Digital for Life Movement in 2021 to promote digital inclusion, literacy, and safety through partnerships with government agencies, schools, community partners, and technology companies. Self-regulatory measures based on good practices — such as codes of conduct, content moderation protocols, and transparency reporting — can play a complementary role, particularly in rapidly evolving technological environments.

However, self-regulation must be grounded in accountability and informed by the expertise of women’s rights organizations and survivors. Too often, platform policies are developed without meaningful input from those most affected by online abuse, resulting in gaps between policy intent and lived experience. Structured engagement mechanisms can help bridge this divide, ensuring that safety measures are responsive to real-world harms. One example is the Global Women’s Safety Expert Group established by Meta to inform the development of its policies, tools, and resources.

Multistakeholder Cooperation and the Role of AI in Prevention

Multistakeholder cooperation is central to any effective response to AI-enabled violence against women and girls. Governments, international organizations, the private sector, and civil society each have distinct roles to play, and collaboration is essential to align incentives, share knowledge, and scale effective interventions.

At the same time, AI itself can be harnessed as part of the solution. There is growing interest in the use of AI and digital tools for positive social change, including the prevention of and response to VAWG. Examples include chatbots that provide information and support to survivors, AI-enabled safety tools, and early-warning systems that identify patterns of abuse. [6]

Initiatives that bring together technology developers and women’s safety experts are particularly promising. Co-creating evidence-based solutions can help ensure that technological innovation aligns with human rights principles and survivor-centered approaches. Capacity-building efforts, such as AI training programs and innovation labs focused on gender equality, can further strengthen this ecosystem.

Conclusion: Towards a Safer, More Equitable Digital Future

The impact of AI on violence against women and girls represents one of the defining gender equality challenges of the digital age. As AI technologies continue to evolve, so too do the forms of harm they enable. Disinformation, deepfake abuse, and AI-driven harassment are not peripheral issues but central threats to women’s rights, safety, and participation in public life.

Responding to these challenges requires a comprehensive approach grounded in human rights, gender equality, and accountability. Legislative and regulatory action, normative development, structured engagement with the technology sector, and multistakeholder cooperation must work in tandem. Crucially, women and girls — and those who defend their rights — must be at the center of these efforts, not merely as beneficiaries but as co-creators of solutions.

Digital platforms and AI systems operate across borders, allowing perpetrators of technology-facilitated violence against women and girls to exploit gaps between legal frameworks and enforcement systems, which makes shared standards and transnational cooperation essential. Emerging global initiatives, including the UN High-Level Advisory Body on AI, the UN Global Digital Compact, and the Council of Europe’s Framework Convention on AI, provide promising foundations through common principles on accountability, transparency, risk assessment, and safeguards. Ensuring these efforts systematically integrate a gender perspective and prioritize ending technology-facilitated violence against women and girls will be critical to their effectiveness.

AI does not have to entrench inequality or violence. With deliberate, informed, and inclusive governance, it can be steered toward a future that enhances safety, amplifies women’s voices, and supports the prevention and elimination of violence against women and girls. The choices made now will shape whether AI becomes a force for harm or a tool for justice in the years to come.

Notes

- 1. UN Women. 2025. How AI is exacerbating technology-facilitated violence against women and girls, https://www.unwomen.org/en/digital-library/publications/2025/12/how-ai-is-exacerbating-technology-facilitated-violence-against-women-and-girls.

- 2. UN Women. 2025. Tipping point: The chilling escalation of online violence against women in the public sphere, https://www.unwomen.org/en/digital-library/publications/2025/12/tipping-point-the-chilling-escalation-of-violence-against-women-in-the-public-sphere-in-the-age-of-ai.

- 3. United Nations General Assembly. 2024. Intensification of efforts to eliminate all forms of violence against women and girls: Technology-facilitated violence against women and girls; Baekgaard, K. 2024. “Technology-Facilitated Gender-Based Violence.” Georgetown Institute for Women, Peace and Security. 18 June.

- 4. Ibid.

- 5. UN Women. 2025. Guidance for police on addressing technology-facilitated violence against women and girls, https://www.unwomen.org/en/digital-library/publications/2025/11/brief-guidance-for-police-on-addressing-technology-facilitated-violence-against-women-and-girls.

- 6. UN Women. 2025. How AI is exacerbating technology-facilitated violence against women and girls; Khan, S. 2023. “How AI exacerbates online gender-based violence.” Organization for Ethical Source.